Seeing through the noise

I've been building Motion Defense for a while now, and it's been public for almost a month. If you haven't come across it, Motion Defense is security camera management software that lets you turn almost any camera or webcam into a security camera or baby monitor.

Any security camera software worth its salt will have motion detection. For example, compare these two frames, captured half a second apart:

Here you have a cat walking through the living room, which correctly set off the motion detector. But how? You can pretty quickly spot the moving parts in the images above, but how does a computer tell if there's motion in a video feed?

The problem

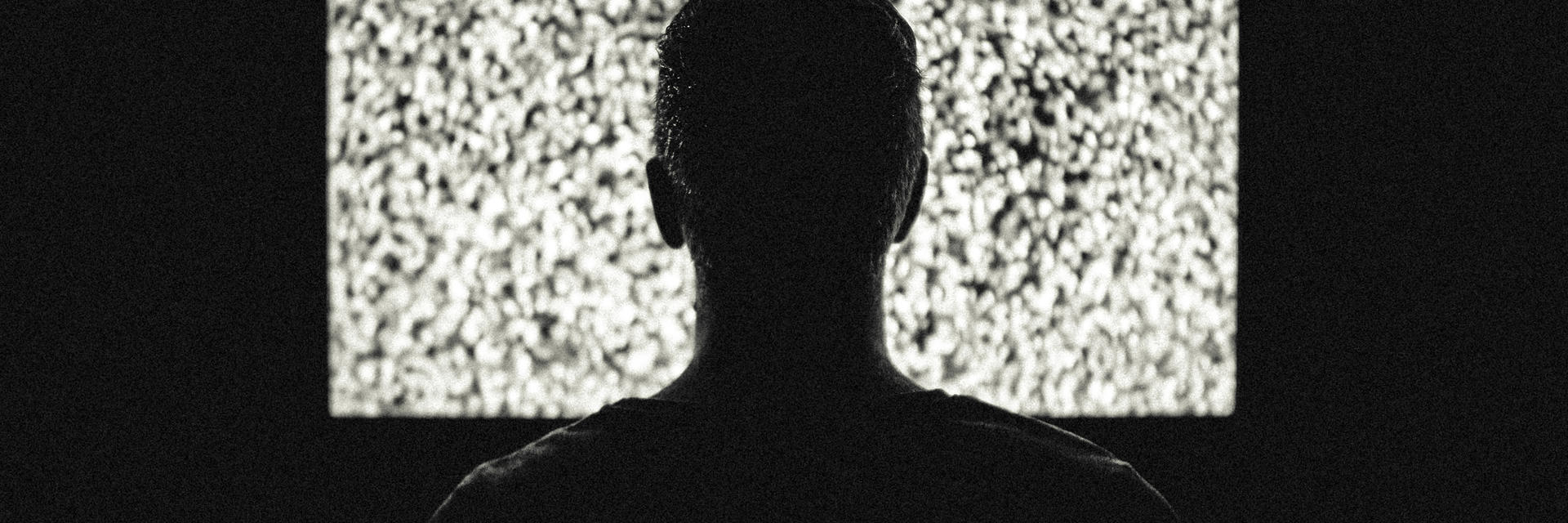

The simplest way for a computer to detect motion is to snap two images and compare them pixel by pixel. As an experiment, let's calculate the pixel differences between these two photos. For speed, we'll reduce each pixel to the average of its RGB values. Pixels whose averages are identical in both images are colored black, and changed pixels are colored white.
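To make the experiment concrete, here is a minimal sketch of that naive comparison. It assumes each frame is a list of rows of `(r, g, b)` tuples with values from 0 to 255; the function names `average_value` and `naive_diff` are made up for illustration and are not taken from Motion Defense.

```python
def average_value(pixel):
    """Reduce an (r, g, b) pixel to the integer average of its channels."""
    r, g, b = pixel
    return (r + g + b) // 3


def naive_diff(frame_a, frame_b):
    """Return a mask of booleans: True (white) where a pixel's average
    value differs between the two frames, False (black) where it matches.

    Assumes both frames have the same dimensions.
    """
    return [
        [average_value(pa) != average_value(pb) for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]
```

Because the comparison is strict equality, even a one-step flicker in a pixel's average marks it as changed.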

Well, that's not very helpful. There are a lot of white (changed) pixels around the cat, but everything else looks like static noise. So what happened?

Look closely at the images above again. There are lots of tiny artifacts all across the image. Nicer cameras may reduce this noise, but I haven't seen a camera yet that completely eliminates it.

What can we do to fix this?

There are a number of strategies for reducing noise when processing video for motion. I use a couple of them in Motion Defense, and they're pretty effective.

Let's look at these strategies individually. The idea behind the first is that most motion you want to detect will not be camouflaged against the backdrop. In our first comparison test, we took the average value of each pixel in both images and compared them for strict equality. To ignore "small" differences, we can instead treat two pixel values as unchanged whenever they differ by less than some amount. Let's call that amount the Color Threshold.

Here's an example. Pixel values range from 0 to 255. Take a hypothetical pixel A in two images: say A1 has a value of 150 and A2 has a value of 160. That's a delta of less than 4% of the 0-255 range, so it might be reasonable to just ignore it. Let's look at the changes between the same two images again, but this time ignore pixel changes of less than 20.
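A rough sketch of the Color Threshold idea follows. It assumes each frame has already been reduced to a grid of per-pixel average values (integers from 0 to 255); `changed_mask` and its `color_threshold` parameter are illustrative names, not Motion Defense's actual API.

```python
def changed_mask(prev, curr, color_threshold=20):
    """Mark a pixel as changed only when its value moved by at least
    color_threshold between the previous and current frame.

    prev and curr are equally sized lists of rows of ints (0-255).
    """
    return [
        [abs(c - p) >= color_threshold for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]
```

With the default threshold of 20, the 150 to 160 example above is ignored, while a jump from 150 to 175 still counts as a change.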

This is so much more usable! There is still a bit of noise around the stairs, though. It's not much, but imagine applying the same technique to an outdoor scene with considerably more random noise. How can we avoid this?

The second strategy is to just ignore any "changed" pixel that's not in a cluster of other "changed" pixels. Let's call the size of this cluster Noise Spread. Picture a Tic Tac Toe board of pixels. For the middle pixel to be considered "changed" in an image, the other 8 pixels surrounding it on the Tic Tac Toe board would also need to be changed.
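Here is one way the Tic Tac Toe check could be sketched. It makes two assumptions that the description above does not settle: `spread` is read as the side length of a square window centered on the pixel (3 for the Tic Tac Toe board), and pixels too close to the border to have a full window are treated as unchanged. The name `filter_isolated` is hypothetical.

```python
def filter_isolated(mask, spread=3):
    """Keep a changed pixel only if every pixel in the spread x spread
    window centered on it is also changed.

    mask is a list of rows of booleans (True = changed).
    Border pixels without a full window are reported as unchanged.
    """
    height = len(mask)
    width = len(mask[0]) if height else 0
    radius = spread // 2
    result = [[False] * width for _ in range(height)]
    for y in range(radius, height - radius):
        for x in range(radius, width - radius):
            result[y][x] = all(
                mask[ny][nx]
                for ny in range(y - radius, y + radius + 1)
                for nx in range(x - radius, x + radius + 1)
            )
    return result
```

Requiring the whole window to be changed is deliberately strict: a solid blob of movement shrinks by `radius` pixels on each side, while a lone noisy pixel disappears entirely.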

It should be noted that this strategy is really supplemental to the first strategy. Because there is so much noise without the previous strategy, relatively few differing pixels would be surrounded by other differing pixels. Let's add a noise spread of 5 to the previous processed image:

That's better! There are still a few stray pixels, but increasing the noise spread further would also reduce the presence of the cat's movement.

Wrapping up

Motion detection doesn't have to be rocket science, but computers need a little help to do it well. Motion Defense takes a pretty simple approach to motion detection in images:

- Compare the current video frame to the previous video frame

- Ignore pixels that are close in color value to their previous counterpart

- Ignore remaining pixels that aren't surrounded by other changed pixels

- If the average change percentage left on the image is large enough, consider it motion
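The four steps above can be strung together in a short sketch. This is only an illustration under stated assumptions, not Motion Defense's actual code: frames are non-empty, equally sized grids of per-pixel average values (0-255), "change percentage" is read as the fraction of pixels that survive both filters, and `detect_motion`, `spread`, and `motion_fraction` are hypothetical names with arbitrary defaults.

```python
def detect_motion(prev, curr, color_threshold=20, spread=3, motion_fraction=0.01):
    """Return True if enough pixels changed between prev and curr."""
    height, width = len(curr), len(curr[0])
    radius = spread // 2

    # Steps 1 and 2: compare each pixel to its previous counterpart and
    # ignore changes smaller than the color threshold.
    changed = [
        [abs(c - p) >= color_threshold for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]

    # Step 3: count only changed pixels whose whole spread x spread
    # neighborhood is also changed (border pixels are skipped).
    surviving = 0
    for y in range(radius, height - radius):
        for x in range(radius, width - radius):
            if all(
                changed[ny][nx]
                for ny in range(y - radius, y + radius + 1)
                for nx in range(x - radius, x + radius + 1)
            ):
                surviving += 1

    # Step 4: call it motion if the surviving fraction is large enough.
    return surviving / (height * width) >= motion_fraction
```

All three parameters trade sensitivity against false alarms, so sensible values will depend on the camera and the scene.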

In case you're curious, I've implemented the algorithms discussed above so you can play around with them below.